Cifar10


This page gives a quick introduction to OpenPifPaf’s Cifar10 plugin that is part of openpifpaf.plugins.

It demonstrates the plugin architecture.

There already is a nice dataset for CIFAR10 in torchvision and a related PyTorch tutorial.

The plugin adds a DataModule that uses this dataset.

Let’s start with the setup for this notebook and register all available OpenPifPaf plugins:

import openpifpaf

openpifpaf.plugin.register()  # discover and register all available plugins

print(openpifpaf.plugin.REGISTERED.keys())

dict_keys(['openpifpaf.plugins.animalpose', 'openpifpaf.plugins.apollocar3d', 'openpifpaf.plugins.cifar10', 'openpifpaf.plugins.coco', 'openpifpaf.plugins.crowdpose', 'openpifpaf.plugins.nuscenes', 'openpifpaf.plugins.posetrack', 'openpifpaf.plugins.wholebody'])
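To make the idea of a plugin registry concrete, here is a minimal sketch in plain Python. It is not OpenPifPaf's implementation: REGISTRY, register_plugin, make_fake_plugin and the myplugins.* module names are hypothetical, and the sketch assumes that every plugin module exposes a register() hook.

```python
import types

# Hypothetical registry: maps a plugin's module name to the module object.
REGISTRY = {}


def register_plugin(module):
    """Record a plugin module and call its register() hook once."""
    if module.__name__ in REGISTRY:
        return  # already registered, nothing to do
    module.register()
    REGISTRY[module.__name__] = module


def make_fake_plugin(name, calls):
    """Build a stand-in module object whose register() logs its own name."""
    module = types.ModuleType(name)
    module.register = lambda: calls.append(name)
    return module


calls = []
for name in ['myplugins.cifar10', 'myplugins.coco', 'myplugins.cifar10']:
    register_plugin(make_fake_plugin(name, calls))

print(sorted(REGISTRY.keys()))
```

The duplicate name is skipped, so each register() hook runs only once, and the registry keys play the same role as the dict_keys output above.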

Next, we configure and instantiate the Cifar10 datamodule and look at the configured head metas:

# configure

openpifpaf.plugins.cifar10.datamodule.Cifar10.debug = True

openpifpaf.plugins.cifar10.datamodule.Cifar10.batch_size = 1

# instantiate and inspect

datamodule = openpifpaf.plugins.cifar10.datamodule.Cifar10()

datamodule.set_loader_workers(0) # no multi-processing to see debug outputs in main thread

datamodule.head_metas

[CifDet(name='cifdet', dataset='cifar10', head_index=None, base_stride=None, upsample_stride=1, categories=('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck'), training_weights=None)]

We see here that CIFAR10 is being treated as a detection dataset (CifDet) and has 10 categories.
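As an illustration of what treating a classification dataset as a detection dataset can mean, the sketch below wraps a class label into a single detection-style annotation. The function name label_to_detection is hypothetical, and two choices are assumptions rather than facts about the plugin: the box spans the whole 32x32 image, and category ids start at 1.

```python
# Category names as listed in the head meta above.
CATEGORIES = ('plane', 'car', 'bird', 'cat', 'deer',
              'dog', 'frog', 'horse', 'ship', 'truck')


def label_to_detection(label, image_size=32):
    """Wrap a 0-based class label into one detection-style annotation.

    Assumptions (not taken from the plugin source): the box spans the whole
    image in (x, y, width, height) format and category ids start at 1.
    """
    if not 0 <= label < len(CATEGORIES):
        raise ValueError(f'label {label} is outside 0..{len(CATEGORIES) - 1}')
    return {
        'bbox': (0.0, 0.0, float(image_size), float(image_size)),
        'category_id': label + 1,
        'category_name': CATEGORIES[label],
    }


annotation = label_to_detection(9)
print(annotation)
```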

To create a network, we use the factory() method of openpifpaf.network.Factory. The Factory is constructed with the name of the base network, cifar10net, and factory() takes the list of head metas.

net = openpifpaf.network.Factory(base_name='cifar10net').factory(head_metas=datamodule.head_metas)

We can inspect the training data that is returned from datamodule.train_loader():

# configure visualization

openpifpaf.visualizer.Base.set_all_indices(['cifdet:9:regression']) # category 9 = truck

# Create a wrapper for a data loader that iterates over a set of matplotlib axes.

# The only purpose is to set a different matplotlib axis before each call to

# retrieve the next image from the data_loader so that it produces multiple

# debug images in one canvas side-by-side.

def loop_over_axes(axes, data_loader):

    previous_common_ax = openpifpaf.visualizer.Base.common_ax

    train_loader_iter = iter(data_loader)

    for ax in axes.reshape(-1):

        openpifpaf.visualizer.Base.common_ax = ax

        yield next(train_loader_iter, None)

    openpifpaf.visualizer.Base.common_ax = previous_common_ax

# create a canvas and loop over the first few entries in the training data

with openpifpaf.show.canvas(ncols=6, nrows=3, figsize=(10, 5)) as axs:

    for images, targets, meta in loop_over_axes(axs, datamodule.train_loader()):

        pass
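The wrapper above relies on a general pattern: a generator that changes shared state before handing out each item and resets it at the end. The following self-contained sketch shows that pattern without OpenPifPaf or matplotlib. The Visualizer class and loop_with_state are hypothetical stand-ins, and the try/finally is an addition that is not in the original wrapper.

```python
class Visualizer:
    """Stand-in for a class with shared, class-level state."""
    common_ax = None


def loop_with_state(slots, items):
    """Yield one item per slot, pointing Visualizer.common_ax at that slot first."""
    previous = Visualizer.common_ax
    item_iter = iter(items)
    try:
        for slot in slots:
            Visualizer.common_ax = slot
            yield next(item_iter, None)  # None once the items run out
    finally:
        # Runs on exhaustion and also when the generator is closed early.
        Visualizer.common_ax = previous


seen = []
for item in loop_with_state(['ax0', 'ax1', 'ax2'], ['a', 'b']):
    seen.append((Visualizer.common_ax, item))

print(seen)
print(Visualizer.common_ax)
```

Because next() is called with a default of None, a slot without a matching item still yields a value, so a consumer that unpacks each item has to handle that case.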

Training

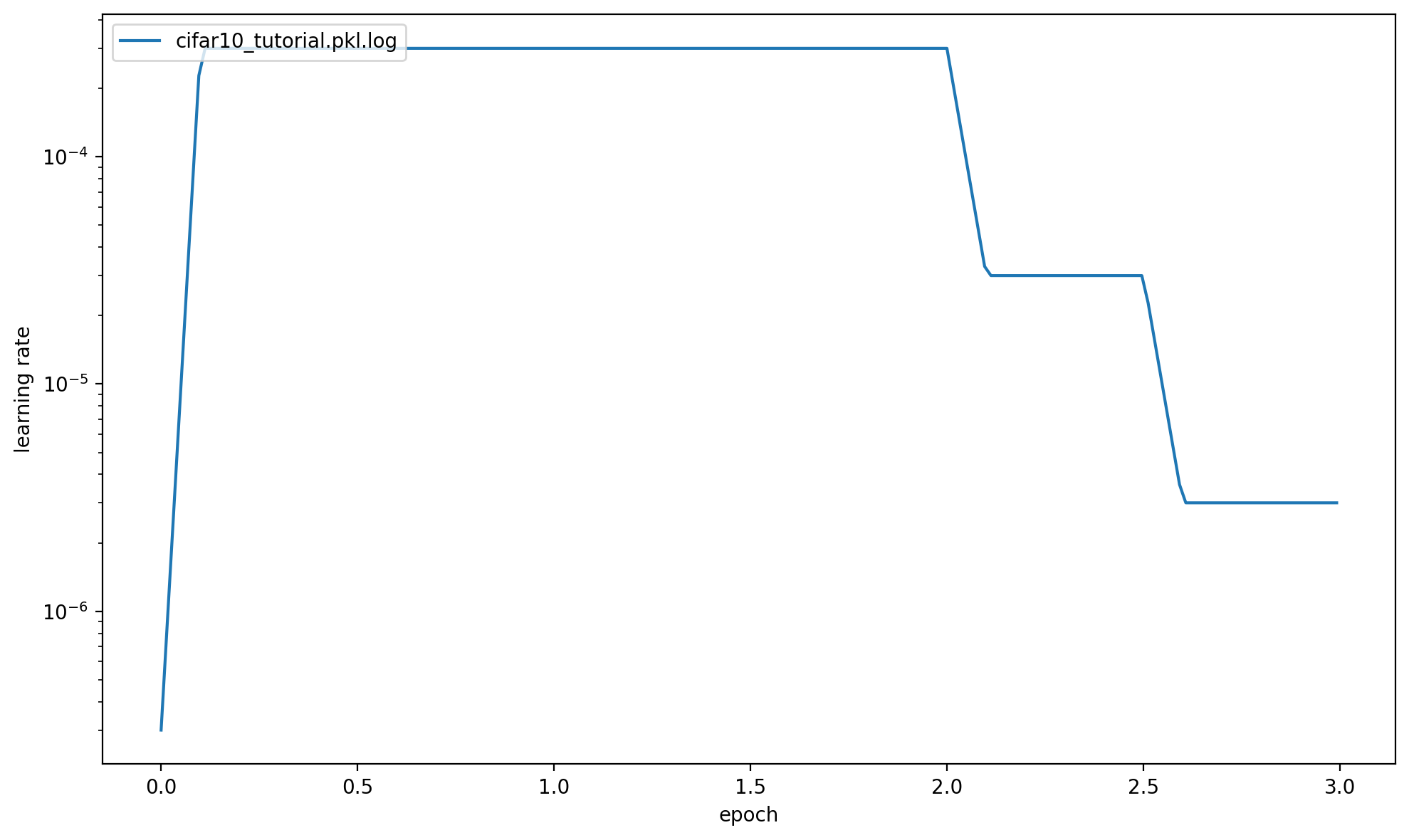

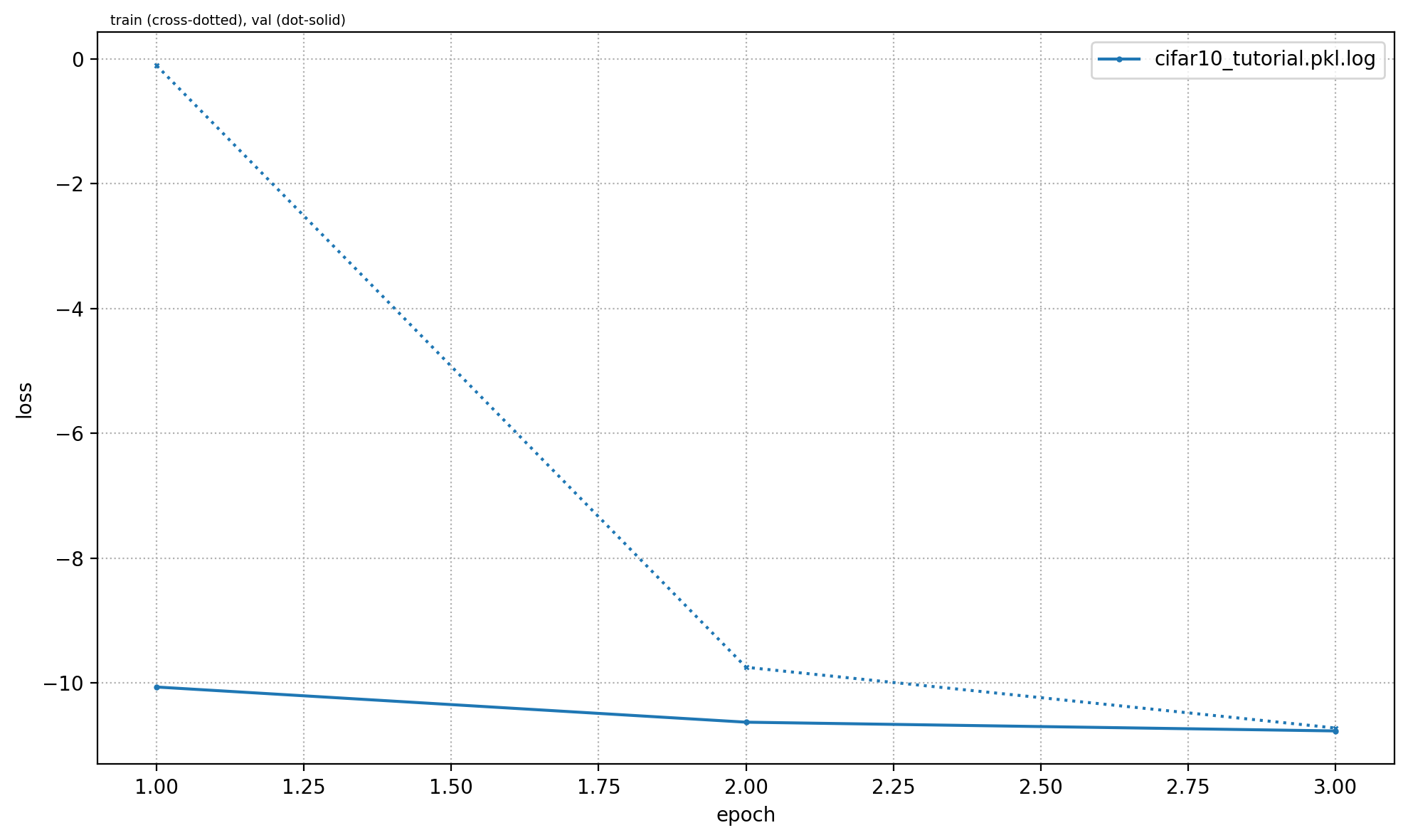

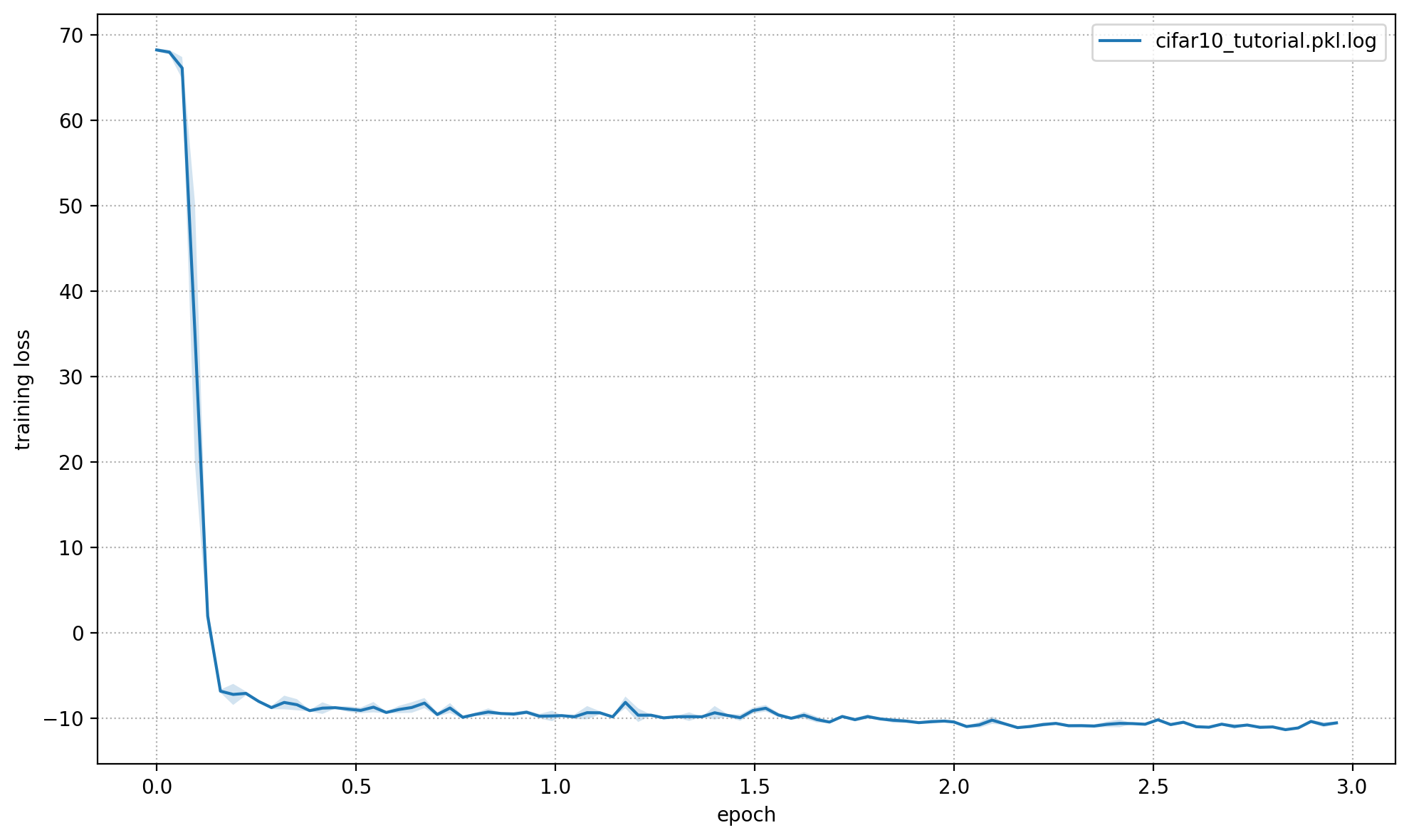

We train a very small network, cifar10net, for only three epochs. Afterwards, we will investigate its predictions.

%%bash
python -m openpifpaf.train \
  --dataset=cifar10 --basenet=cifar10net --log-interval=50 \
  --epochs=3 --lr=0.0003 --momentum=0.95 --batch-size=16 \
  --lr-warm-up-epochs=0.1 --lr-decay 2.0 2.5 --lr-decay-epochs=0.1 \
  --loader-workers=2 --output=cifar10_tutorial.pkl
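The effect of the warm-up flags can be checked against the learning rates in the log output below. This sketch assumes an exponential warm-up of the form lr * factor ** (1 - batch / warm_up_batches). The formula is inferred from the logged values and is not copied from the OpenPifPaf source. The factor 0.001 is the 'lr_warm_up_factor' entry in the logged arguments, and 50,000 is the size of the CIFAR10 training split.

```python
N_TRAIN_IMAGES = 50_000   # size of the CIFAR10 training split
BATCH_SIZE = 16           # --batch-size
BASE_LR = 0.0003          # --lr
WARM_UP_EPOCHS = 0.1      # --lr-warm-up-epochs
WARM_UP_FACTOR = 0.001    # 'lr_warm_up_factor' in the logged arguments

batches_per_epoch = N_TRAIN_IMAGES // BATCH_SIZE
warm_up_batches = WARM_UP_EPOCHS * batches_per_epoch


def warm_up_lr(batch):
    """Assumed exponential warm-up from BASE_LR * WARM_UP_FACTOR up to BASE_LR."""
    if batch >= warm_up_batches:
        return BASE_LR
    return BASE_LR * WARM_UP_FACTOR ** (1.0 - batch / warm_up_batches)


print(batches_per_epoch)
for batch in (0, 50, 100, 150, 350):
    print(batch, round(warm_up_lr(batch), 8))
```

The batch count matches the logged "training batches per epoch", and the rounded learning rates can be compared with the 'lr' field of the first trainer log records.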

INFO:__main__:neural network device: cpu (CUDA available: False, count: 0)

INFO:__main__:Running Python 3.10.13

INFO:__main__:Running PyTorch 2.2.1+cpu

INFO:openpifpaf.network.basenetworks:cifar10net: stride = 16, output features = 128

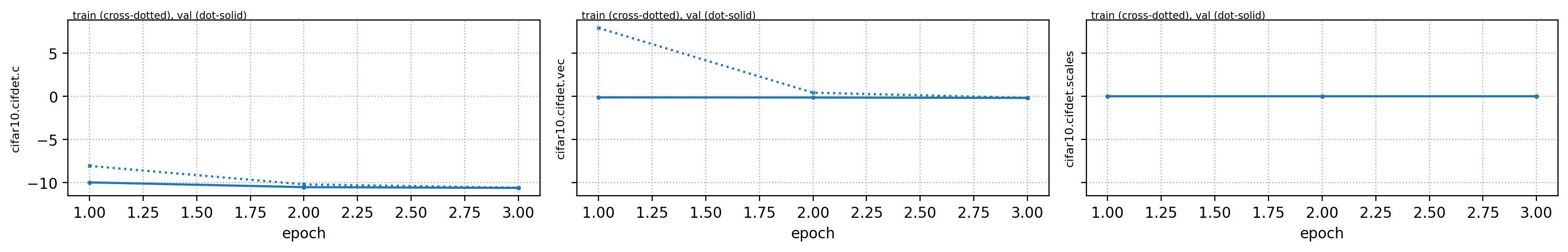

INFO:openpifpaf.network.losses.multi_head:multihead loss: ['cifar10.cifdet.c', 'cifar10.cifdet.vec', 'cifar10.cifdet.scales'], [1.0, 1.0, 1.0]

INFO:openpifpaf.logger:{'type': 'process', 'argv': ['/opt/hostedtoolcache/Python/3.10.13/x64/lib/python3.10/site-packages/openpifpaf/train.py', '--dataset=cifar10', '--basenet=cifar10net', '--log-interval=50', '--epochs=3', '--lr=0.0003', '--momentum=0.95', '--batch-size=16', '--lr-warm-up-epochs=0.1', '--lr-decay', '2.0', '2.5', '--lr-decay-epochs=0.1', '--loader-workers=2', '--output=cifar10_tutorial.pkl'], 'args': {'output': 'cifar10_tutorial.pkl', 'disable_cuda': False, 'ddp': False, 'local_rank': None, 'sync_batchnorm': True, 'resume_training': None, 'quiet': False, 'debug': False, 'log_stats': False, 'shufflenetv2_pretrained': True, 'squeezenet_pretrained': True, 'shufflenetv2k_input_conv2_stride': 0, 'shufflenetv2k_input_conv2_outchannels': None, 'shufflenetv2k_stage4_dilation': 1, 'shufflenetv2k_kernel': 5, 'shufflenetv2k_conv5_as_stage': False, 'shufflenetv2k_instance_norm': False, 'shufflenetv2k_group_norm': False, 'shufflenetv2k_leaky_relu': False, 'mobilenetv2_pretrained': True, 'mobilenetv3_pretrained': True, 'resnet_pretrained': True, 'resnet_pool0_stride': 0, 'resnet_input_conv_stride': 2, 'resnet_input_conv2_stride': 0, 'resnet_block5_dilation': 1, 'resnet_remove_last_block': False, 'hrformer_scale_level': 0, 'hrformer_pretrained': True, 'clipconvnext_pretrained': True, 'convnextv2_pretrained': True, 'xcit_out_channels': None, 'xcit_out_maxpool': False, 'xcit_pretrained': True, 'swin_drop_path_rate': 0.2, 'swin_input_upsample': False, 'swin_use_fpn': False, 'swin_fpn_out_channels': None, 'swin_fpn_level': 3, 'swin_pretrained': True, 'cf4_dropout': 0.0, 'cf4_inplace_ops': True, 'checkpoint': None, 'basenet': 'cifar10net', 'cross_talk': 0.0, 'download_progress': True, 'head_consolidation': 'filter_and_extend', 'lambdas': None, 'component_lambdas': None, 'auto_tune_mtl': False, 'auto_tune_mtl_variance': False, 'task_sparsity_weight': 0.0, 'loss_prescale': 1.0, 'regression_loss': 'laplace', 'bce_total_soft_clamp': None, 'soft_clamp': 5.0, 
'laplace_soft_clamp': 5.0, 'background_weight': 1.0, 'focal_alpha': 0.5, 'focal_gamma': 1.0, 'focal_detach': False, 'focal_clamp': True, 'bce_min': 0.0, 'bce_soft_clamp': 5.0, 'bce_background_clamp': -15.0, 'b_scale': 1.0, 'scale_log': False, 'scale_soft_clamp': 5.0, 'r_smooth': 0.0, 'epochs': 3, 'train_batches': None, 'val_batches': None, 'clip_grad_norm': 0.0, 'clip_grad_value': 0.0, 'log_interval': 50, 'val_interval': 1, 'stride_apply': 1, 'fix_batch_norm': False, 'ema': 0.01, 'profile': None, 'cif_side_length': 4, 'caf_min_size': 3, 'caf_fixed_size': False, 'caf_aspect_ratio': 0.0, 'encoder_suppress_selfhidden': True, 'encoder_suppress_invisible': False, 'encoder_suppress_collision': False, 'momentum': 0.95, 'beta2': 0.999, 'adam_eps': 1e-06, 'nesterov': True, 'weight_decay': 0.0, 'adam': False, 'adamw': False, 'amsgrad': False, 'lr': 0.0003, 'lr_decay_type': 'step', 'lr_decay': [2.0, 2.5], 'lr_decay_factor': 0.1, 'lr_decay_epochs': 0.1, 'lr_warm_up_type': 'exp', 'lr_warm_up_start_epoch': 0, 'lr_warm_up_epochs': 0.1, 'lr_warm_up_factor': 0.001, 'lr_warm_restarts': [], 'lr_warm_restart_duration': 0.5, 'dataset': 'cifar10', 'loader_workers': 2, 'batch_size': 16, 'dataset_weights': None, 'animal_train_annotations': 'data-animalpose/annotations/animal_keypoints_20_train.json', 'animal_val_annotations': 'data-animalpose/annotations/animal_keypoints_20_val.json', 'animal_train_image_dir': 'data-animalpose/images/train/', 'animal_val_image_dir': 'data-animalpose/images/val/', 'animal_square_edge': 513, 'animal_extended_scale': False, 'animal_orientation_invariant': 0.0, 'animal_blur': 0.0, 'animal_augmentation': True, 'animal_rescale_images': 1.0, 'animal_upsample': 1, 'animal_min_kp_anns': 1, 'animal_bmin': 1, 'animal_eval_test2017': False, 'animal_eval_testdev2017': False, 'animal_eval_annotation_filter': True, 'animal_eval_long_edge': 0, 'animal_eval_extended_scale': False, 'animal_eval_orientation_invariant': 0.0, 'apollo_train_annotations': 
'data-apollocar3d/annotations/apollo_keypoints_66_train.json', 'apollo_val_annotations': 'data-apollocar3d/annotations/apollo_keypoints_66_val.json', 'apollo_train_image_dir': 'data-apollocar3d/images/train/', 'apollo_val_image_dir': 'data-apollocar3d/images/val/', 'apollo_square_edge': 513, 'apollo_extended_scale': False, 'apollo_orientation_invariant': 0.0, 'apollo_blur': 0.0, 'apollo_augmentation': True, 'apollo_rescale_images': 1.0, 'apollo_upsample': 1, 'apollo_min_kp_anns': 1, 'apollo_bmin': 1, 'apollo_apply_local_centrality': False, 'apollo_eval_annotation_filter': True, 'apollo_eval_long_edge': 0, 'apollo_eval_extended_scale': False, 'apollo_eval_orientation_invariant': 0.0, 'apollo_use_24_kps': False, 'cifar10_root_dir': 'data-cifar10/', 'cifar10_download': False, 'cocodet_train_annotations': 'data-mscoco/annotations/instances_train2017.json', 'cocodet_val_annotations': 'data-mscoco/annotations/instances_val2017.json', 'cocodet_train_image_dir': 'data-mscoco/images/train2017/', 'cocodet_val_image_dir': 'data-mscoco/images/val2017/', 'cocodet_square_edge': 513, 'cocodet_extended_scale': False, 'cocodet_orientation_invariant': 0.0, 'cocodet_blur': 0.0, 'cocodet_augmentation': True, 'cocodet_rescale_images': 1.0, 'cocodet_upsample': 1, 'cocokp_train_annotations': 'data-mscoco/annotations/person_keypoints_train2017.json', 'cocokp_val_annotations': 'data-mscoco/annotations/person_keypoints_val2017.json', 'cocokp_train_image_dir': 'data-mscoco/images/train2017/', 'cocokp_val_image_dir': 'data-mscoco/images/val2017/', 'cocokp_square_edge': 385, 'cocokp_with_dense': False, 'cocokp_extended_scale': False, 'cocokp_orientation_invariant': 0.0, 'cocokp_blur': 0.0, 'cocokp_augmentation': True, 'cocokp_rescale_images': 1.0, 'cocokp_upsample': 1, 'cocokp_min_kp_anns': 1, 'cocokp_bmin': 0.1, 'cocokp_eval_test2017': False, 'cocokp_eval_testdev2017': False, 'coco_eval_annotation_filter': True, 'coco_eval_long_edge': 641, 'coco_eval_extended_scale': False, 
'coco_eval_orientation_invariant': 0.0, 'crowdpose_train_annotations': 'data-crowdpose/json/crowdpose_train.json', 'crowdpose_val_annotations': 'data-crowdpose/json/crowdpose_val.json', 'crowdpose_image_dir': 'data-crowdpose/images/', 'crowdpose_square_edge': 385, 'crowdpose_extended_scale': False, 'crowdpose_orientation_invariant': 0.0, 'crowdpose_augmentation': True, 'crowdpose_rescale_images': 1.0, 'crowdpose_upsample': 1, 'crowdpose_min_kp_anns': 1, 'crowdpose_eval_test': False, 'crowdpose_eval_long_edge': 641, 'crowdpose_eval_extended_scale': False, 'crowdpose_eval_orientation_invariant': 0.0, 'crowdpose_index': None, 'nuscenes_train_annotations': '../../../NuScenes/mscoco_style_annotations/nuimages_v1.0-train.json', 'nuscenes_val_annotations': '../../../NuScenes/mscoco_style_annotations/nuimages_v1.0-val.json', 'nuscenes_train_image_dir': '../../../NuScenes/nuimages-v1.0-all-samples', 'nuscenes_val_image_dir': '../../../NuScenes/nuimages-v1.0-all-samples', 'nuscenes_square_edge': 513, 'nuscenes_extended_scale': False, 'nuscenes_orientation_invariant': 0.0, 'nuscenes_blur': 0.0, 'nuscenes_augmentation': True, 'nuscenes_rescale_images': 1.0, 'nuscenes_upsample': 1, 'posetrack2018_train_annotations': 'data-posetrack2018/annotations/train/*.json', 'posetrack2018_val_annotations': 'data-posetrack2018/annotations/val/*.json', 'posetrack2018_eval_annotations': 'data-posetrack2018/annotations/val/*.json', 'posetrack2018_data_root': 'data-posetrack2018', 'posetrack_square_edge': 385, 'posetrack_with_dense': False, 'posetrack_augmentation': True, 'posetrack_rescale_images': 1.0, 'posetrack_upsample': 1, 'posetrack_min_kp_anns': 1, 'posetrack_bmin': 0.1, 'posetrack_sample_pairing': 0.0, 'posetrack_image_augmentations': 0.0, 'posetrack_max_shift': 30.0, 'posetrack_eval_long_edge': 801, 'posetrack_eval_extended_scale': False, 'posetrack_eval_orientation_invariant': 0.0, 'posetrack_ablation_without_tcaf': False, 'posetrack2017_eval_annotations': 
'data-posetrack2017/annotations/val/*.json', 'posetrack2017_data_root': 'data-posetrack2017', 'cocokpst_max_shift': 30.0, 'wholebody_train_annotations': 'data-mscoco/annotations/person_keypoints_train2017_wholebody_pifpaf_style.json', 'wholebody_val_annotations': 'data-mscoco/annotations/coco_wholebody_val_v1.0.json', 'wholebody_train_image_dir': 'data-mscoco/images/train2017/', 'wholebody_val_image_dir': 'data-mscoco/images/val2017', 'wholebody_square_edge': 385, 'wholebody_extended_scale': False, 'wholebody_orientation_invariant': 0.0, 'wholebody_blur': 0.0, 'wholebody_augmentation': True, 'wholebody_rescale_images': 1.0, 'wholebody_upsample': 1, 'wholebody_min_kp_anns': 1, 'wholebody_bmin': 1.0, 'wholebody_apply_local_centrality': False, 'wholebody_eval_test2017': False, 'wholebody_eval_testdev2017': False, 'wholebody_eval_annotation_filter': True, 'wholebody_eval_long_edge': 641, 'wholebody_eval_extended_scale': False, 'wholebody_eval_orientation_invariant': 0.0, 'save_all': None, 'show': False, 'image_width': None, 'image_height': None, 'image_dpi_factor': 2.0, 'image_min_dpi': 50.0, 'show_file_extension': 'jpeg', 'textbox_alpha': 0.5, 'text_color': 'white', 'font_size': 8, 'monocolor_connections': False, 'line_width': None, 'skeleton_solid_threshold': 0.5, 'show_box': False, 'white_overlay': False, 'show_joint_scales': False, 'show_joint_confidences': False, 'show_decoding_order': False, 'show_frontier_order': False, 'show_only_decoded_connections': False, 'video_fps': 10, 'video_dpi': 100, 'debug_indices': [], 'device': device(type='cpu'), 'pin_memory': False}, 'version': '0.14.2', 'plugin_versions': {}, 'hostname': 'fv-az1206-187'}

INFO:openpifpaf.optimize:SGD optimizer

INFO:openpifpaf.optimize:training batches per epoch = 3125

INFO:openpifpaf.network.trainer:{'type': 'config', 'field_names': ['cifar10.cifdet.c', 'cifar10.cifdet.vec', 'cifar10.cifdet.scales']}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch000

INFO:openpifpaf.network.trainer:training state written: cifar10_tutorial.pkl.optim.epoch000

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 0, 'n_batches': 3125, 'time': 0.022, 'data_time': 0.081, 'lr': 3e-07, 'loss': 68.034, 'head_losses': [2.019, 66.015, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 50, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 9.1e-07, 'loss': 68.415, 'head_losses': [1.925, 66.49, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 2.74e-06, 'loss': 68.224, 'head_losses': [1.974, 66.25, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 150, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 8.26e-06, 'loss': 67.708, 'head_losses': [2.065, 65.644, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 200, 'n_batches': 3125, 'time': 0.01, 'data_time': 0.003, 'lr': 2.495e-05, 'loss': 67.398, 'head_losses': [2.039, 65.359, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 7.536e-05, 'loss': 64.822, 'head_losses': [2.398, 62.424, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 300, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.00022757, 'loss': 50.394, 'head_losses': [5.686, 44.708, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 350, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': 19.843, 'head_losses': [-1.851, 21.695, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 400, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': 3.319, 'head_losses': [-6.364, 9.683, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': 0.635, 'head_losses': [-8.08, 8.715, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -6.639, 'head_losses': [-8.414, 1.775, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 550, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -6.99, 'head_losses': [-8.9, 1.91, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 600, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -5.963, 'head_losses': [-8.851, 2.888, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 650, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.43, 'head_losses': [-9.166, 0.736, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 700, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -6.845, 'head_losses': [-8.991, 2.145, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 750, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -7.308, 'head_losses': [-8.846, 1.538, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 800, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -7.952, 'head_losses': [-8.957, 1.005, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 850, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.003, 'lr': 0.0003, 'loss': -8.078, 'head_losses': [-9.301, 1.223, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.927, 'head_losses': [-9.408, 0.481, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.549, 'head_losses': [-9.246, 0.697, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1000, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -7.342, 'head_losses': [-9.309, 1.968, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1050, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.934, 'head_losses': [-9.043, 0.108, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1100, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -7.749, 'head_losses': [-9.057, 1.308, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1150, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.055, 'head_losses': [-9.346, 0.291, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1200, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.012, 'head_losses': [-9.414, 0.402, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1250, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.178, 'head_losses': [-9.269, 0.091, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1300, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.481, 'head_losses': [-9.49, 0.009, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1350, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.119, 'head_losses': [-9.371, 1.252, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1400, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.846, 'head_losses': [-9.37, 0.525, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.65, 'head_losses': [-9.476, 0.826, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1500, 'n_batches': 3125, 'time': 0.012, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.237, 'head_losses': [-9.361, 0.124, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1550, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.587, 'head_losses': [-9.426, 0.839, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1600, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.395, 'head_losses': [-9.346, -0.049, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1650, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.735, 'head_losses': [-9.511, 0.776, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1700, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.002, 'lr': 0.0003, 'loss': -8.079, 'head_losses': [-9.242, 1.162, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1750, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.297, 'head_losses': [-9.382, 0.085, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1800, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.209, 'head_losses': [-9.298, 0.09, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1850, 'n_batches': 3125, 'time': 0.007, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.412, 'head_losses': [-9.421, 0.009, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.533, 'head_losses': [-9.52, 0.988, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 1950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.389, 'head_losses': [-9.419, 0.03, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2000, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.108, 'head_losses': [-9.198, 1.09, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2050, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.348, 'head_losses': [-9.772, 0.424, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2100, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -7.614, 'head_losses': [-9.667, 2.053, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2150, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.804, 'head_losses': [-9.8, 0.996, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2200, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.769, 'head_losses': [-9.924, 0.155, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.325, 'head_losses': [-9.629, 0.304, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2300, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.205, 'head_losses': [-9.416, 1.21, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2350, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.367, 'head_losses': [-9.803, 0.436, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2400, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.695, 'head_losses': [-9.782, 0.087, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.049, 'head_losses': [-10.263, 0.214, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.712, 'head_losses': [-9.943, 0.231, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2550, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.35, 'head_losses': [-9.501, 0.151, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2600, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.682, 'head_losses': [-9.896, 0.213, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2650, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.834, 'head_losses': [-9.753, 0.92, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2700, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.527, 'head_losses': [-9.86, 0.333, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2750, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.338, 'head_losses': [-9.697, 0.359, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2800, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.735, 'head_losses': [-9.774, 0.04, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2850, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.247, 'head_losses': [-9.773, 0.526, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2900, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.052, 'head_losses': [-10.035, 0.984, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 2950, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.508, 'head_losses': [-10.0, 0.492, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3000, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.479, 'head_losses': [-9.79, 0.31, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3050, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.003, 'head_losses': [-10.132, 0.129, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 0, 'batch': 3100, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.103, 'head_losses': [-9.636, 0.533, 0.0]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 1, 'loss': -0.10601, 'head_losses': [-8.04568, 7.93966, 0.0], 'time': 34.5, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch001

INFO:openpifpaf.network.trainer:training state written: cifar10_tutorial.pkl.optim.epoch001

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 1, 'loss': -10.06269, 'head_losses': [-9.95204, -0.11065, 0.0], 'time': 5.1}

INFO:openpifpaf.network.trainer:restoring params from before ema
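The "applying ema" and "restoring params from before ema" messages bracket the checkpoint and validation step of the epoch. The sketch below illustrates that apply-and-restore pattern with plain floats in place of tensors. It is not the trainer's code: EmaWeights and its methods are hypothetical, and the update rule ema = (1 - decay) * ema + decay * param with decay 0.01 is an assumption chosen to match the 'ema': 0.01 entry in the logged arguments.

```python
class EmaWeights:
    """Track an exponential moving average of a list of float parameters."""

    def __init__(self, params, decay=0.01):
        self.decay = decay
        self.ema = list(params)   # running average, starts at the initial weights
        self.backup = None        # raw weights saved while the average is applied

    def step(self, params):
        """Blend the current weights into the average after an optimizer step."""
        self.ema = [(1.0 - self.decay) * e + self.decay * p
                    for e, p in zip(self.ema, params)]

    def apply(self, params):
        """Swap the averaged weights in, e.g. before saving and validating."""
        self.backup = list(params)
        params[:] = self.ema

    def restore(self, params):
        """Swap the raw weights back in before training continues."""
        params[:] = self.backup
        self.backup = None


weights = [0.0]
ema = EmaWeights(weights)
for _ in range(3):
    weights[0] += 1.0      # stand-in for an optimizer update
    ema.step(weights)

ema.apply(weights)
averaged = weights[0]      # value that would be saved and validated
ema.restore(weights)
print(averaged, weights[0])
```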

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 0, 'n_batches': 3125, 'time': 0.017, 'data_time': 0.055, 'lr': 0.0003, 'loss': -10.329, 'head_losses': [-10.27, -0.059, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 50, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.625, 'head_losses': [-10.038, 0.413, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.729, 'head_losses': [-9.85, 0.12, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 150, 'n_batches': 3125, 'time': 0.012, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.077, 'head_losses': [-10.141, 0.063, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 200, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.546, 'head_losses': [-9.661, 0.115, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.11, 'head_losses': [-10.364, 0.254, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 300, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.559, 'head_losses': [-9.357, 0.799, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 350, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.534, 'head_losses': [-9.987, 0.453, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 400, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.162, 'head_losses': [-9.726, 0.564, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.732, 'head_losses': [-9.876, 0.144, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.916, 'head_losses': [-10.117, 0.201, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 550, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -7.439, 'head_losses': [-9.847, 2.407, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 600, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.814, 'head_losses': [-9.082, 0.269, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 650, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.426, 'head_losses': [-10.542, 0.115, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 700, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.826, 'head_losses': [-10.237, 1.411, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 750, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.562, 'head_losses': [-9.99, 0.428, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 800, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.689, 'head_losses': [-10.145, 0.456, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 850, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.045, 'head_losses': [-10.37, 0.325, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.838, 'head_losses': [-10.008, 0.17, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 950, 'n_batches': 3125, 'time': 0.012, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.721, 'head_losses': [-9.834, 0.113, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1000, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.902, 'head_losses': [-9.941, 0.039, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1050, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.289, 'head_losses': [-9.971, 0.681, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.301, 'head_losses': [-10.382, 0.081, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1150, 'n_batches': 3125, 'time': 0.007, 'data_time': 0.005, 'lr': 0.0003, 'loss': -9.825, 'head_losses': [-10.265, 0.441, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1200, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.8, 'head_losses': [-10.093, 0.293, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.137, 'head_losses': [-10.742, 0.605, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1300, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.552, 'head_losses': [-10.092, 1.54, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1350, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.811, 'head_losses': [-10.09, 0.279, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1400, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.49, 'head_losses': [-9.931, 0.44, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.549, 'head_losses': [-10.128, 0.579, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.293, 'head_losses': [-10.414, 0.122, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1550, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.439, 'head_losses': [-10.107, 0.668, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1600, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.727, 'head_losses': [-10.099, 1.372, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1650, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -8.429, 'head_losses': [-10.539, 2.11, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1700, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.199, 'head_losses': [-9.433, 0.234, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1750, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.856, 'head_losses': [-10.45, 0.593, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1800, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.356, 'head_losses': [-9.8, 0.445, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1850, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.115, 'head_losses': [-10.264, 0.149, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1900, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.861, 'head_losses': [-10.41, 0.549, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 1950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.027, 'head_losses': [-10.096, 0.068, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2000, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.234, 'head_losses': [-10.116, 0.882, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2050, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.792, 'head_losses': [-10.726, 0.934, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2100, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.478, 'head_losses': [-10.683, 0.205, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2150, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.29, 'head_losses': [-10.425, 0.136, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2200, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.573, 'head_losses': [-10.713, 0.14, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.892, 'head_losses': [-10.111, 0.219, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2300, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.662, 'head_losses': [-9.825, 0.162, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2350, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.349, 'head_losses': [-10.517, 0.168, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2400, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.949, 'head_losses': [-10.293, 0.344, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2450, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.047, 'head_losses': [-10.643, 0.596, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.537, 'head_losses': [-10.074, 0.536, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2550, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -9.984, 'head_losses': [-10.66, 0.676, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2600, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.148, 'head_losses': [-10.595, 0.447, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2650, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.531, 'head_losses': [-10.566, 0.034, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2700, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 0.0003, 'loss': -9.913, 'head_losses': [-10.401, 0.488, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2750, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.503, 'head_losses': [-10.583, 0.08, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2800, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.134, 'head_losses': [-10.178, 0.044, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2850, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.412, 'head_losses': [-10.551, 0.138, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.59, 'head_losses': [-10.73, 0.14, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 2950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 0.0003, 'loss': -10.608, 'head_losses': [-10.82, 0.212, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3000, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.159, 'head_losses': [-10.21, 0.051, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3050, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.258, 'head_losses': [-10.597, 0.338, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 1, 'batch': 3100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.0003, 'loss': -10.378, 'head_losses': [-10.479, 0.1, 0.0]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 2, 'loss': -9.74639, 'head_losses': [-10.18281, 0.43642, 0.0], 'time': 34.5, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch002

INFO:openpifpaf.network.trainer:training state written: cifar10_tutorial.pkl.optim.epoch002

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 2, 'loss': -10.62612, 'head_losses': [-10.5011, -0.12502, 0.0], 'time': 5.0}

INFO:openpifpaf.network.trainer:restoring params from before ema

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 0, 'n_batches': 3125, 'time': 0.017, 'data_time': 0.05, 'lr': 0.0003, 'loss': -10.288, 'head_losses': [-10.723, 0.435, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 50, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.00020755, 'loss': -10.553, 'head_losses': [-10.506, -0.047, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 0.00014359, 'loss': -11.058, 'head_losses': [-10.954, -0.105, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 150, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 9.934e-05, 'loss': -10.849, 'head_losses': [-10.719, -0.129, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 200, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 6.873e-05, 'loss': -11.101, 'head_losses': [-10.977, -0.124, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 4.755e-05, 'loss': -10.419, 'head_losses': [-10.267, -0.152, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 300, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3.289e-05, 'loss': -10.637, 'head_losses': [-10.503, -0.135, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 350, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -9.78, 'head_losses': [-9.653, -0.127, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 400, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.795, 'head_losses': [-10.654, -0.14, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 450, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.49, 'head_losses': [-10.334, -0.155, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.003, 'lr': 3e-05, 'loss': -11.044, 'head_losses': [-10.914, -0.13, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 550, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-05, 'loss': -11.121, 'head_losses': [-10.955, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 600, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -11.121, 'head_losses': [-10.954, -0.168, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 650, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.773, 'head_losses': [-10.605, -0.168, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 700, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.494, 'head_losses': [-10.35, -0.144, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 750, 'n_batches': 3125, 'time': 0.012, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.949, 'head_losses': [-10.773, -0.175, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 800, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.443, 'head_losses': [-10.299, -0.144, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 850, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.737, 'head_losses': [-10.581, -0.156, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.71, 'head_losses': [-10.548, -0.163, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -11.012, 'head_losses': [-10.855, -0.157, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1000, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.684, 'head_losses': [-10.521, -0.163, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1050, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.003, 'lr': 3e-05, 'loss': -11.022, 'head_losses': [-10.857, -0.165, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1100, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.706, 'head_losses': [-10.534, -0.173, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1150, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 3e-05, 'loss': -11.088, 'head_losses': [-10.92, -0.168, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1200, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.374, 'head_losses': [-10.215, -0.159, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1250, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -11.021, 'head_losses': [-10.847, -0.173, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1300, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.131, 'head_losses': [-9.969, -0.162, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1350, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -11.04, 'head_losses': [-10.865, -0.175, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1400, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.584, 'head_losses': [-10.41, -0.174, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1450, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-05, 'loss': -10.639, 'head_losses': [-10.464, -0.175, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1500, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.744, 'head_losses': [-10.578, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1550, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-05, 'loss': -10.628, 'head_losses': [-10.467, -0.162, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1600, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 2.276e-05, 'loss': -10.129, 'head_losses': [-9.96, -0.169, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1650, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 1.574e-05, 'loss': -10.207, 'head_losses': [-10.062, -0.144, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1700, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 1.089e-05, 'loss': -10.896, 'head_losses': [-10.717, -0.179, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1750, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 7.54e-06, 'loss': -10.556, 'head_losses': [-10.399, -0.157, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1800, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 5.21e-06, 'loss': -10.56, 'head_losses': [-10.375, -0.185, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1850, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3.61e-06, 'loss': -10.349, 'head_losses': [-10.184, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1900, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.813, 'head_losses': [-10.647, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 1950, 'n_batches': 3125, 'time': 0.007, 'data_time': 0.002, 'lr': 3e-06, 'loss': -11.139, 'head_losses': [-10.996, -0.142, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2000, 'n_batches': 3125, 'time': 0.012, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.94, 'head_losses': [-10.753, -0.187, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2050, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.132, 'head_losses': [-10.947, -0.186, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.553, 'head_losses': [-10.376, -0.178, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2150, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.803, 'head_losses': [-10.659, -0.143, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2200, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.219, 'head_losses': [-11.038, -0.181, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2250, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.669, 'head_losses': [-10.504, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2300, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.762, 'head_losses': [-10.593, -0.169, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2350, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.796, 'head_losses': [-10.636, -0.16, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2400, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.236, 'head_losses': [-11.079, -0.157, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2450, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.855, 'head_losses': [-10.695, -0.16, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2500, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.046, 'head_losses': [-10.873, -0.173, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2550, 'n_batches': 3125, 'time': 0.009, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.955, 'head_losses': [-10.763, -0.192, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2600, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.368, 'head_losses': [-11.18, -0.188, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2650, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.277, 'head_losses': [-11.091, -0.186, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2700, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.119, 'head_losses': [-10.946, -0.173, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2750, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-06, 'loss': -11.119, 'head_losses': [-10.936, -0.183, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2800, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.183, 'head_losses': [-10.009, -0.174, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2850, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.536, 'head_losses': [-10.371, -0.166, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2900, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.45, 'head_losses': [-10.281, -0.168, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 2950, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.001, 'lr': 3e-06, 'loss': -11.031, 'head_losses': [-10.855, -0.175, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3000, 'n_batches': 3125, 'time': 0.008, 'data_time': 0.002, 'lr': 3e-06, 'loss': -10.357, 'head_losses': [-10.187, -0.17, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3050, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.702, 'head_losses': [-10.527, -0.175, 0.0]}

INFO:openpifpaf.network.trainer:{'type': 'train', 'epoch': 2, 'batch': 3100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.98, 'head_losses': [-10.808, -0.172, 0.0]}

INFO:openpifpaf.network.trainer:applying ema

INFO:openpifpaf.network.trainer:{'type': 'train-epoch', 'epoch': 3, 'loss': -10.71974, 'head_losses': [-10.56505, -0.1547, 0.0], 'time': 34.6, 'n_clipped_grad': 0, 'max_norm': 0.0}

INFO:openpifpaf.network.trainer:model written: cifar10_tutorial.pkl.epoch003

INFO:openpifpaf.network.trainer:training state written: cifar10_tutorial.pkl.optim.epoch003

INFO:openpifpaf.network.trainer:{'type': 'val-epoch', 'epoch': 3, 'loss': -10.76633, 'head_losses': [-10.59546, -0.17087, 0.0], 'time': 5.1}

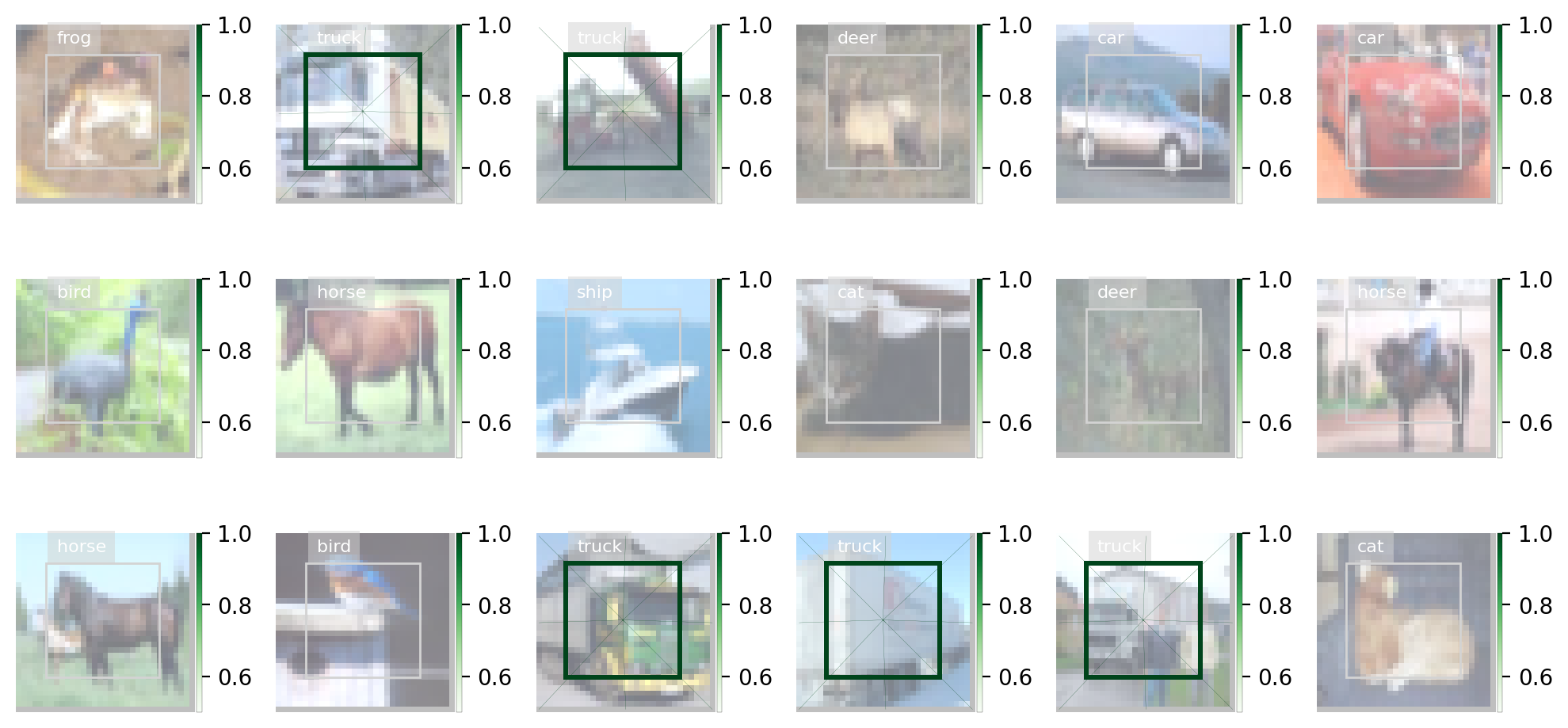

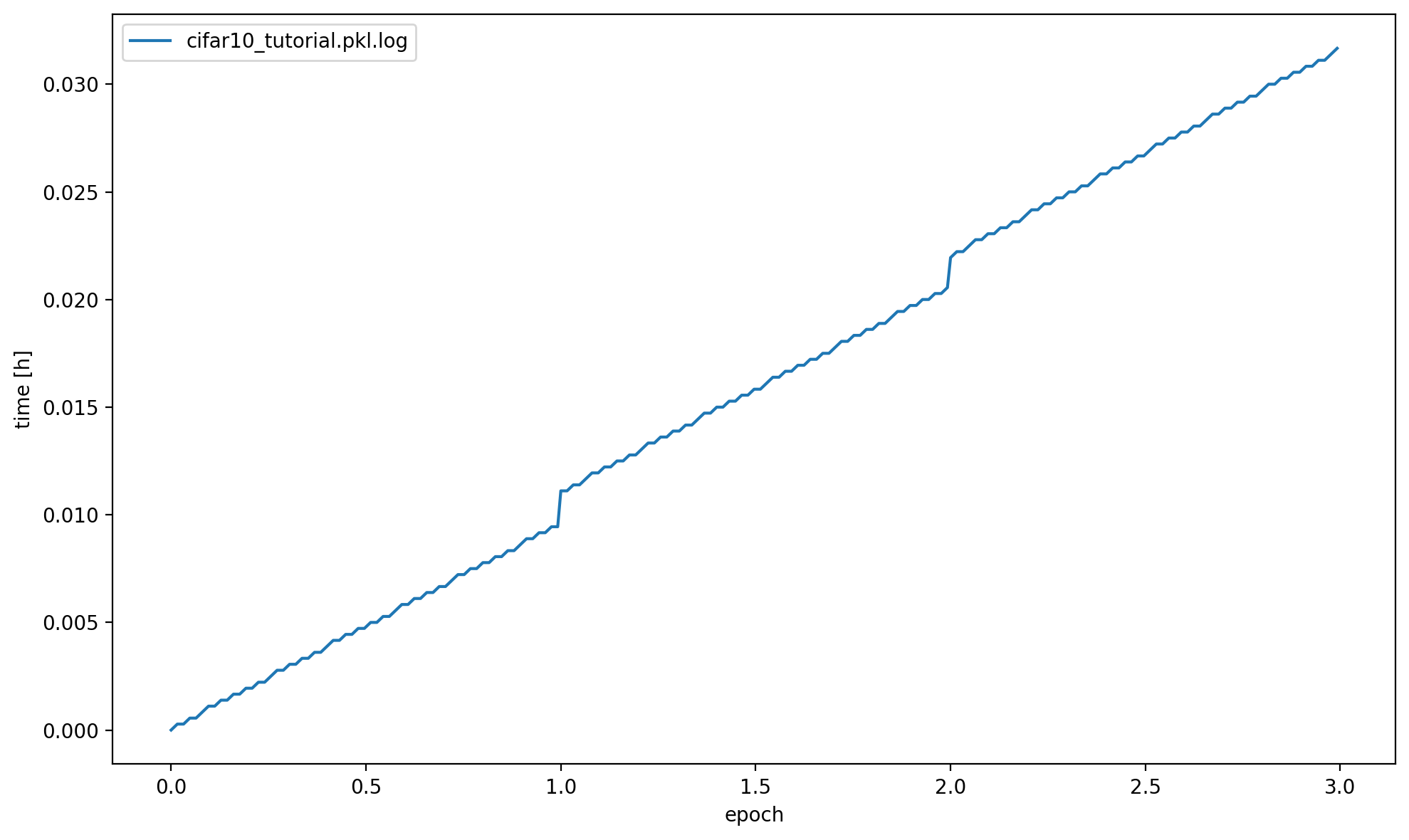

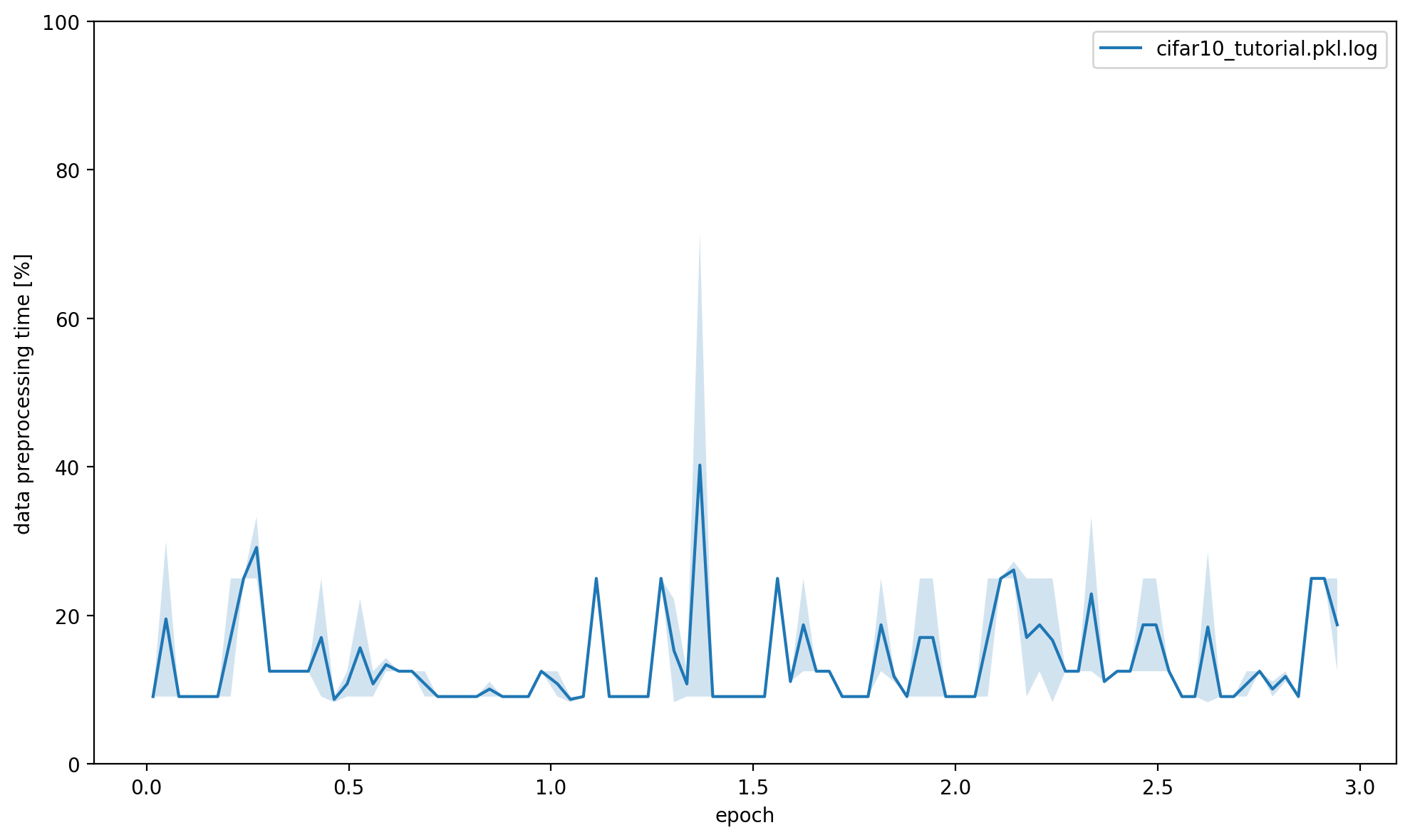

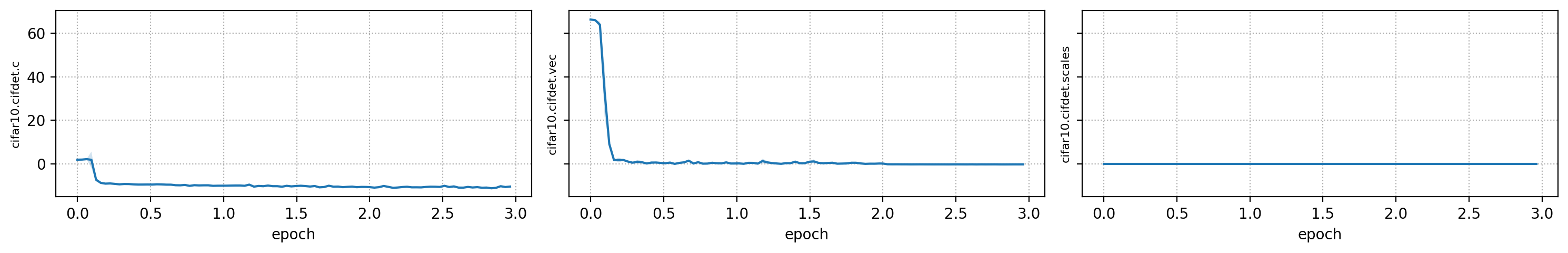
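The `lr` values in the training log follow a simple pattern that can be reproduced from the command-line arguments. The sketch below is a reconstruction inferred from the logged numbers, not code taken from OpenPifPaf: it assumes that each entry in `--lr-decay` starts an exponential decay by a factor of 10 that is spread over `--lr-decay-epochs` epochs. The function name `learning_rate` and its parameters are hypothetical, and the warm-up phase is not modelled.

```python
import math


def learning_rate(epoch, batch, n_batches=3125, base_lr=3e-4,
                  decay_starts=(2.0, 2.5), decay_epochs=0.1, factor=0.1):
    """Reconstruct the logged learning rate after warm-up (illustrative only).

    Assumption: every decay multiplies the rate by `factor`, applied
    exponentially over `decay_epochs` epochs. The factor of 0.1 is inferred
    from the log (3e-4 -> 3e-5 -> 3e-6), not read from the library.
    """
    t = epoch + batch / n_batches  # fractional epoch
    lr = base_lr
    for start in decay_starts:
        # Fraction of this decay that has been completed, clamped to [0, 1].
        progress = min(max((t - start) / decay_epochs, 0.0), 1.0)
        lr *= factor ** progress
    return lr


# Compare against the 'lr' field of the epoch-2 log records.
for batch in (0, 50, 300, 350, 1600, 1900):
    print(batch, f"{learning_rate(2, batch):.4g}")
```

Under these assumptions the values agree with the logged rates to the printed precision, for example at batches 50, 300 and 1600 of epoch 2.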

Plot Training Logs#

You can create a set of plots from the command line with python -m openpifpaf.logs cifar10_tutorial.pkl.log. You can also overlay multiple runs. Below we call the plotting code from that command directly to show the output in this notebook.

openpifpaf.logs.Plots(['cifar10_tutorial.pkl.log']).show_all()

{'cifar10_tutorial.pkl.log': ['--dataset=cifar10',

                              '--basenet=cifar10net',

                              '--log-interval=50',

                              '--epochs=3',

                              '--lr=0.0003',

                              '--momentum=0.95',

                              '--batch-size=16',

                              '--lr-warm-up-epochs=0.1',

                              '--lr-decay',

                              '2.0',

                              '2.5',

                              '--lr-decay-epochs=0.1',

                              '--loader-workers=2',

                              '--output=cifar10_tutorial.pkl']}

cifar10_tutorial.pkl.log: {'message': '', 'levelname': 'INFO', 'name': 'openpifpaf.network.trainer', 'asctime': '2024-03-14 13:54:01,067', 'type': 'train', 'epoch': 2, 'batch': 3100, 'n_batches': 3125, 'time': 0.011, 'data_time': 0.001, 'lr': 3e-06, 'loss': -10.98, 'head_losses': [-10.808, -0.172, 0.0]}

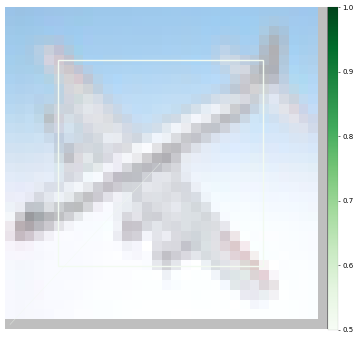

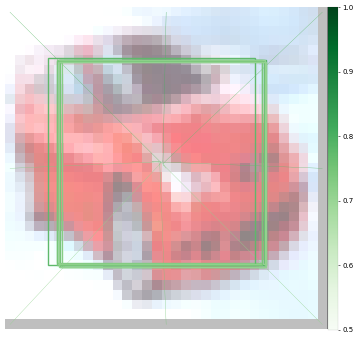
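If you want the numbers behind the plots rather than the plots themselves, the log can also be read directly. The following is a minimal sketch that assumes the log file holds one JSON object per line with the same fields as the record printed above. The sample records are copied from the training output and rewritten as JSON, and the helper name `epoch_summaries` is hypothetical.

```python
import json

# Sample records copied from the training output, rewritten as JSON lines.
# Assumption: the real log file uses this one-object-per-line layout.
SAMPLE_LOG = """\
{"type": "train-epoch", "epoch": 2, "loss": -9.74639}
{"type": "val-epoch", "epoch": 2, "loss": -10.62612}
{"type": "train", "epoch": 2, "batch": 3100, "n_batches": 3125, "lr": 3e-06, "loss": -10.98}
{"type": "train-epoch", "epoch": 3, "loss": -10.71974}
{"type": "val-epoch", "epoch": 3, "loss": -10.76633}
"""


def epoch_summaries(lines):
    """Collect the per-epoch train and validation losses from log lines."""
    summaries = {}
    for line in lines:
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip blank lines and anything that is not a JSON object
        record = json.loads(line)
        if record.get("type") in ("train-epoch", "val-epoch"):
            summaries.setdefault(record["epoch"], {})[record["type"]] = record["loss"]
    return summaries


summaries = epoch_summaries(SAMPLE_LOG.splitlines())
for epoch, losses in sorted(summaries.items()):
    print(epoch, losses)
```

To try this on a real run, pass an open file object instead of the sample string, for example `epoch_summaries(open('cifar10_tutorial.pkl.log'))`.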

Prediction#

First, using the CLI:

%%bash

python -m openpifpaf.predict --checkpoint cifar10_tutorial.pkl.epoch003 images/cifar10_*.png --seed-threshold=0.1 --json-output . --quiet

WARNING:openpifpaf.decoder.cifcaf:consistency: decreasing keypoint threshold to seed threshold of 0.100000

%%bash

cat cifar10_*.json

[{"category_id": 1, "category": "plane", "score": 0.414, "bbox": [5.15, 5.06, 21.02, 21.05]}, {"category_id": 9, "category": "ship", "score": 0.37, "bbox": [5.08, 4.97, 21.0, 21.0]}, {"category_id": 3, "category": "bird", "score": 0.329, "bbox": [5.11, 5.02, 21.04, 21.02]}, {"category_id": 5, "category": "deer", "score": 0.265, "bbox": [4.99, 5.07, 21.01, 21.04]}, {"category_id": 4, "category": "cat", "score": 0.217, "bbox": [4.98, 5.06, 21.02, 21.02]}, {"category_id": 6, "category": "dog", "score": 0.212, "bbox": [5.06, 5.0, 20.98, 21.07]}, {"category_id": 8, "category": "horse", "score": 0.207, "bbox": [4.93, 4.93, 21.03, 20.96]}, {"category_id": 10, "category": "truck", "score": 0.202, "bbox": [4.88, 4.86, 21.08, 21.14]}][{"category_id": 2, "category": "car", "score": 0.48, "bbox": [4.18, 4.83, 21.16, 21.17]}, {"category_id": 10, "category": "truck", "score": 0.442, "bbox": [3.92, 4.69, 21.21, 21.25]}, {"category_id": 9, "category": "ship", "score": 0.201, "bbox": [5.14, 5.0, 21.2, 21.02]}][{"category_id": 1, "category": "plane", "score": 0.361, "bbox": [5.1, 5.05, 20.99, 21.01]}, {"category_id": 9, "category": "ship", "score": 0.355, "bbox": [4.89, 4.89, 21.13, 21.02]}, {"category_id": 10, "category": "truck", "score": 0.303, "bbox": [5.14, 5.01, 20.98, 21.05]}, {"category_id": 2, "category": "car", "score": 0.272, "bbox": [4.94, 4.81, 21.0, 21.09]}, {"category_id": 3, "category": "bird", "score": 0.27, "bbox": [5.14, 5.14, 20.93, 21.12]}, {"category_id": 4, "category": "cat", "score": 0.213, "bbox": [5.03, 4.99, 20.93, 20.97]}, {"category_id": 5, "category": "deer", "score": 0.186, "bbox": [5.08, 5.0, 20.94, 20.98]}, {"category_id": 8, "category": "horse", "score": 0.177, "bbox": [5.02, 5.01, 20.93, 20.95]}, {"category_id": 6, "category": "dog", "score": 0.172, "bbox": [4.97, 4.98, 21.0, 21.01]}][{"category_id": 10, "category": "truck", "score": 0.361, "bbox": [5.01, 4.97, 21.07, 20.98]}, {"category_id": 2, "category": "car", "score": 0.319, "bbox": [5.06, 5.09, 20.99, 21.0]}, {"category_id": 8, "category": "horse", "score": 0.303, "bbox": [4.95, 4.92, 20.97, 21.01]}, {"category_id": 4, "category": "cat", "score": 0.302, "bbox": [4.84, 5.0, 21.04, 21.05]}, {"category_id": 9, "category": "ship", "score": 0.286, "bbox": [4.97, 5.0, 20.99, 20.98]}, {"category_id": 6, "category": "dog", "score": 0.283, "bbox": [4.84, 5.13, 21.02, 20.88]}, {"category_id": 1, "category": "plane", "score": 0.272, "bbox": [4.96, 4.96, 21.04, 21.0]}, {"category_id": 3, "category": "bird", "score": 0.254, "bbox": [4.85, 4.99, 21.05, 21.04]}, {"category_id": 7, "category": "frog", "score": 0.223, "bbox": [5.01, 5.12, 21.03, 20.93]}, {"category_id": 5, "category": "deer", "score": 0.219, "bbox": [4.95, 4.78, 20.99, 21.1]}]
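Each JSON file holds a list of detections for one image, so reading a classification result out of it amounts to picking the highest-scoring entry. The sketch below uses the first three detections of the first image from the output above. It assumes that `bbox` is laid out as `[x, y, width, height]`, and `box_center` is a hypothetical helper.

```python
import json

# First three detections of the first image, copied from the JSON output above.
raw = (
    '[{"category_id": 1, "category": "plane", "score": 0.414, "bbox": [5.15, 5.06, 21.02, 21.05]}, '
    '{"category_id": 9, "category": "ship", "score": 0.37, "bbox": [5.08, 4.97, 21.0, 21.0]}, '
    '{"category_id": 3, "category": "bird", "score": 0.329, "bbox": [5.11, 5.02, 21.04, 21.02]}]'
)
predictions = json.loads(raw)


def box_center(bbox):
    """Return the box center, assuming bbox = [x, y, width, height]."""
    x, y, w, h = bbox
    return x + w / 2, y + h / 2


# The detection with the highest score serves as the predicted class.
top = max(predictions, key=lambda p: p["score"])
cx, cy = box_center(top["bbox"])
print(top["category"], top["score"], round(cx, 2), round(cy, 2))
```

Under that layout assumption, all boxes in the output have nearly the same position and size and sit close to the middle of the 32×32 CIFAR10 image, so only the category and score carry information here.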

Using the API:

net_cpu, _ = openpifpaf.network.Factory(checkpoint='cifar10_tutorial.pkl.epoch003').factory()

preprocess = openpifpaf.transforms.Compose([

    openpifpaf.transforms.NormalizeAnnotations(),

    openpifpaf.transforms.CenterPadTight(16),

    openpifpaf.transforms.EVAL_TRANSFORM,

])

openpifpaf.decoder.utils.CifDetSeeds.set_threshold(0.3)

decode = openpifpaf.decoder.factory([hn.meta for hn in net_cpu.head_nets])

data = openpifpaf.datasets.ImageList([

    'images/cifar10_airplane4.png',

    'images/cifar10_automobile10.png',

    'images/cifar10_ship7.png',

    'images/cifar10_truck8.png',

], preprocess=preprocess)

for image, _, meta in data:

    predictions = decode.batch(net_cpu, image.unsqueeze(0))[0]

    print(['{} {:.0%}'.format(pred.category, pred.score) for pred in predictions])

['plane 41%', 'ship 37%', 'bird 33%']

['car 48%', 'truck 44%']

['plane 36%', 'ship 36%', 'truck 30%']

['truck 36%', 'car 32%', 'horse 30%', 'cat 30%']
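The API output is shorter than the CLI output because the threshold is set to 0.3 here, whereas the CLI call used `--seed-threshold=0.1`. As a rough illustration, keeping only the CLI detections with a score of at least 0.3 gives the same list for the second image. This is a plain score filter written for this page and not a description of what the decoder does internally. `summarize` is a hypothetical helper.

```python
# CLI detections for the second image (category and score only),
# copied from the JSON output of the CLI run.
cli_predictions = [
    {"category": "car", "score": 0.48},
    {"category": "truck", "score": 0.442},
    {"category": "ship", "score": 0.201},
]


def summarize(predictions, min_score):
    """Format detections like the API example, dropping low scores."""
    return [
        "{} {:.0%}".format(p["category"], p["score"])
        for p in predictions
        if p["score"] >= min_score
    ]


print(summarize(cli_predictions, 0.3))
```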

Evaluation#

I selected the images above because their category is clear to me. Other images in CIFAR10 are harder to categorize, even for a human, and are therefore probably harder for a neural network too.

Therefore, we should run a proper quantitative evaluation with openpifpaf.eval. It stores its output as a JSON file, so we print that afterwards.

%%bash

python -m openpifpaf.eval --checkpoint cifar10_tutorial.pkl.epoch003 --dataset=cifar10 --seed-threshold=0.1 --instance-threshold=0.1 --quiet

WARNING:openpifpaf.decoder.cifcaf:consistency: decreasing keypoint threshold to seed threshold of 0.100000

[INFO] Register count_convNd() for <class 'torch.nn.modules.conv.Conv2d'>.

%%bash

python -m json.tool cifar10_tutorial.pkl.epoch003.eval-cifar10.stats.json

{

    "text_labels": [

        "total",

        "plane",

        "car",

        "bird",

        "cat",

        "deer",

        "dog",

        "frog",

        "horse",

        "ship",

        "truck"

    ],

    "stats": [

        0.4006,

        0.44,

        0.66,

        0.144,

        0.241,

        0.342,

        0.352,

        0.438,

        0.478,

        0.541,

        0.37

    ],

    "args": [

        "/opt/hostedtoolcache/Python/3.10.13/x64/lib/python3.10/site-packages/openpifpaf/eval.py",

        "--checkpoint",

        "cifar10_tutorial.pkl.epoch003",

        "--dataset=cifar10",

        "--seed-threshold=0.1",

        "--instance-threshold=0.1",

        "--quiet"

    ],

    "version": "0.14.2",

    "dataset": "cifar10",

    "total_time": 22.35475206700005,

    "checkpoint": "cifar10_tutorial.pkl.epoch003",

    "count_ops": [

        421736880.0,

        105180.0

    ],

    "file_size": 438894,

    "n_images": 10000,

    "decoder_time": 7.497657569998182,

    "nn_time": 6.1505197100049145

}

We see that some categories such as “car” (0.66), “ship” (0.541) and “plane” (0.44) are learned quickly, whereas others such as “bird” (0.144) are learned poorly. The modest overall score of 0.4006 is not surprising, as we trained our network for only three epochs.
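The `text_labels` and `stats` lists in the JSON file are parallel, so they can be zipped into a per-category table. The sketch below copies both lists from the output above and ranks the categories. It also checks that, in this particular output, the "total" entry equals the unweighted mean of the ten per-category values. The variable names are illustrative.

```python
import json

# The two parallel lists, copied from the stats file printed above.
stats_json = """
{
    "text_labels": ["total", "plane", "car", "bird", "cat", "deer",
                    "dog", "frog", "horse", "ship", "truck"],
    "stats": [0.4006, 0.44, 0.66, 0.144, 0.241, 0.342,
              0.352, 0.438, 0.478, 0.541, 0.37]
}
"""
report = json.loads(stats_json)

# Pair every label with its score and separate the aggregate entry.
scores = dict(zip(report["text_labels"], report["stats"]))
total = scores.pop("total")

# Rank categories from best to worst.
ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
for category, score in ranked:
    print(f"{category:>6}: {score:.3f}")

# Unweighted mean over the ten categories, for comparison with "total".
mean_score = sum(scores.values()) / len(scores)
print("mean of categories:", round(mean_score, 4), "reported total:", total)
```

The ranking makes the spread between the best category ("car") and the worst ("bird") easy to see at a glance.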